Step-by-Step Guide to Creating Intelligent Agents that Handle Repetitive QA Tasks Autonomously

The rise of intelligent agents in quality assurance addresses a fundamental challenge that has plagued software testing for decades: repetitive, error-prone tasks consuming enormous human effort while delivering inconsistent results.

Manual test execution requires testers to perform the same validations hundreds of times, script maintenance demands constant updates as applications change, defect triage involves repetitive log analysis and categorization, and regression testing repeats identical scenarios across every release cycle.

This guide walks through building an intelligent QA agent step by step, so that organizations can create agentic testing solutions tailored to their own testing needs while following design patterns that support reliability, maintainability, and continuous improvement.

Step 1: Define Agent Identity and Purpose

Clear definition prevents scope creep and ensures focused QA agent capabilities:

Specify QA Tasks Precisely

Test Generation Agents:

- Convert requirements into executable test cases automatically

- Analyze code changes to generate relevant test scenarios

- Identify edge cases through systematic exploration

- Generate test data matching production patterns

- Create API validation from endpoint documentation

Execution Agents:

- Run test suites across multiple environments autonomously

- Execute tests on diverse browser and device configurations

- Perform load testing with realistic user simulation

- Conduct security scans identifying vulnerabilities

- Validate accessibility compliance automatically

Defect Triage Agents:

- Categorize failures by type and severity automatically

- Assign bugs to appropriate owners based on code changes

- Identify duplicate issues through similarity analysis

- Prioritize defects by business impact and urgency

- Extract relevant diagnostic information from logs
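
As one illustration of the duplicate-detection item above, a minimal sketch using Python's standard `difflib` might look like the following. The `find_duplicates` helper, the sample bug titles, and the 0.8 threshold are assumptions made for illustration; a production agent would more likely compare embeddings or stack traces than raw titles.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two normalized bug titles."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def find_duplicates(new_title: str, existing: dict[str, str],
                    threshold: float = 0.8) -> list[str]:
    """Return IDs of existing bugs whose title is at least `threshold` similar."""
    return [
        bug_id
        for bug_id, title in existing.items()
        if similarity(new_title, title) >= threshold
    ]


# Hypothetical open bugs, keyed by ID.
open_bugs = {
    "BUG-101": "Login button unresponsive on Safari",
    "BUG-102": "Checkout total ignores discount code",
}

matches = find_duplicates("Login button unresponsive in Safari", open_bugs)
```

Here `matches` contains only `BUG-101`, because the new title differs from it by a single character while the checkout bug shares little text with it.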

Maintenance Agents:

- Repair broken test scripts through self-healing

- Update test cases reflecting application changes

- Optimize test suites removing redundancy

- Refactor tests improving maintainability

- Archive obsolete scenarios no longer relevant

Define Clear Boundaries

QA Agent Capabilities:

- Specific tasks the agent handles autonomously

- Decision-making authority within defined parameters

- Appropriate tool and system access permissions

- Data sources available for information gathering

- Output formats and communication channels

Agentic Testing Limitations:

- Tasks requiring human judgment and approval

- Situations triggering escalation to humans

- Boundaries preventing unintended actions

- Safety constraints protecting production systems

- Ethical guidelines governing agent behavior
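
The capabilities and limitations above can be written down as data that the agent checks before acting. The following is a rough sketch under stated assumptions: the `AgentCharter` class, the action names, and the `production-db` target are hypothetical and not part of any particular framework.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentCharter:
    """Declares what an agent may do alone and what needs a human."""
    name: str
    autonomous_actions: frozenset[str]
    approval_required: frozenset[str]
    forbidden_targets: frozenset[str] = field(default_factory=frozenset)

    def decide(self, action: str, target: str) -> str:
        """Return 'allow', 'escalate', or 'deny' for a proposed action."""
        if target in self.forbidden_targets:
            return "deny"       # safety constraint: never touch these systems
        if action in self.autonomous_actions:
            return "allow"
        if action in self.approval_required:
            return "escalate"   # human judgment and approval needed
        return "deny"           # anything not listed is out of scope


# Hypothetical charter for a defect triage agent.
triage_agent = AgentCharter(
    name="triage-agent",
    autonomous_actions=frozenset({"categorize_failure", "link_duplicate"}),
    approval_required=frozenset({"close_bug", "reassign_owner"}),
    forbidden_targets=frozenset({"production-db"}),
)
```

Denying by default means any action missing from the charter is treated as out of scope, which is one way to keep scope creep visible.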

Determine Interaction Modes

Input Channels:

- Text commands through chat interfaces

- Voice instructions via natural language processing

- GUI interactions for visual applications

- API calls from integrated systems

- Event-driven triggers from monitoring tools

Behavioral Patterns:

- Proactive QA agents initiate actions based on observations

- Reactive agents respond to explicit requests

- Hybrid agentic testing combining both patterns

- Scheduled operations running at specific intervals

- Continuous monitoring with threshold-based activation

Step 2: Understand Input and Perception

Accurate environment perception enables QA agents to generate actionable insights:

Identify Comprehensive Data Sources

Test Artifacts:

- Existing test case repositories and documentation

- Test execution history and results databases

- Coverage reports showing validated areas

- Test data sets and generation rules

- Screenshot and video recording archives

Application Information:

- Source code repositories tracking changes

- UI snapshots showing current interfaces

- API documentation and endpoint specifications

- Database schemas and data models

- Architecture diagrams and dependency maps

Operational Data:

- Log files from applications and infrastructure

- Performance metrics and telemetry

- Error reports and stack traces

- User behavior analytics

- Production incident records

Development Context:

- Pull request descriptions and code reviews

- Sprint planning documents and user stories

- Requirement specifications and acceptance criteria

- Defect tracking system contents

- CI/CD pipeline configurations and results

Design Input Processing Pipelines

Natural Language Processing:

- Parse user stories extracting testable requirements

- Understand conversational test instructions

- Interpret bug reports identifying key details

- Analyze documentation for validation needs

- Generate human-readable summaries from technical data

Telemetry Analysis:

- Process real-time application performance metrics

- Correlate events across distributed systems

- Identify anomalies and trends automatically

- Extract relevant signals from noisy data

- Aggregate information for pattern recognition

Event Processing:

- Monitor code commits triggering test generation

- Detect deployment events initiating validation

- Recognize failure patterns requiring attention

- Identify environment changes affecting tests

- Track threshold violations needing responses

Ensure Perception Accuracy

Validation Mechanisms:

- Cross-reference information from multiple sources

- Verify data quality and completeness

- Detect inconsistencies requiring resolution

- Handle missing or corrupted inputs gracefully

- Maintain confidence scores for perceived information

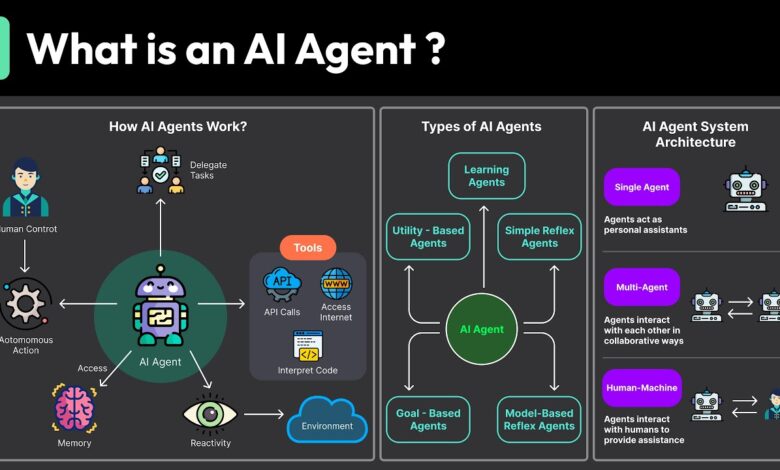

Step 3: Reasoning and Decision-Making Architecture

Intelligent reasoning enables autonomous problem-solving in agentic testing:

Select Appropriate AI/ML Models

Large Language Models (LLMs):

- Understand natural language requirements for QA agents

- Generate test case descriptions from specifications

- Interpret error messages identifying issues

- Create bug report narratives from diagnostic data

- Answer questions about test coverage and results

Reinforcement Learning:

- Optimize test execution strategies through trial

- Learn effective defect triage policies from feedback

- Improve test maintenance approaches over time

- Adapt to changing application characteristics

- Balance exploration of new strategies with exploitation of proven ones
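
The exploration and exploitation trade-off in the last item can be illustrated with an epsilon-greedy selector, one of the simplest bandit strategies. This is a sketch only; the class name, the strategy names, and the reward values are assumptions, and a real agent would define reward from signals such as defects found per minute of execution.

```python
import random
from typing import Optional


class EpsilonGreedySelector:
    """Pick among test strategies, mostly exploiting the best average reward."""

    def __init__(self, strategies: list[str], epsilon: float = 0.1,
                 seed: Optional[int] = None) -> None:
        self.epsilon = epsilon
        self.counts = {s: 0 for s in strategies}
        self.means = {s: 0.0 for s in strategies}
        self._rng = random.Random(seed)

    def choose(self) -> str:
        if self._rng.random() < self.epsilon:
            return self._rng.choice(list(self.counts))   # explore
        return max(self.means, key=self.means.get)       # exploit

    def update(self, strategy: str, reward: float) -> None:
        """Fold a new reward into the running mean for `strategy`."""
        self.counts[strategy] += 1
        n = self.counts[strategy]
        self.means[strategy] += (reward - self.means[strategy]) / n


# Hypothetical strategies and rewards; epsilon=0.0 disables exploration.
selector = EpsilonGreedySelector(["risk_first", "fastest_first"], epsilon=0.0)
selector.update("risk_first", 1.0)
selector.update("fastest_first", 0.2)
choice = selector.choose()
```

With exploration disabled, `choice` is `risk_first`, the strategy with the higher average reward so far. A non-zero `epsilon` makes the selector occasionally try the other strategy.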

Classification Models:

- Categorize test failures by root cause

- Predict defect severity from characteristics

- Identify test case relevance to code changes

- Classify bug reports by component ownership

- Determine test stability and flakiness likelihood

Regression Models:

- Predict test execution duration for scheduling

- Estimate defect density in code modules

- Forecast resource requirements for testing

- Project completion timelines for test suites

- Assess risk levels for releases

Develop Multi-Layer Reasoning

Perception Layer:

- Process raw inputs into structured representations

- Extract features relevant for decision-making

- Maintain situational awareness of testing state

- Track context across sequential interactions

- Update understanding based on new information

Analysis Layer:

- Evaluate current situation against objectives

- Identify problems requiring attention

- Assess multiple solution options

- Consider constraints and trade-offs

- Generate hypotheses about root causes

Decision Layer:

- Select optimal actions based on analysis

- Apply learned policies from experience

- Follow domain rules and heuristics

- Balance competing objectives appropriately

- Determine when human escalation is needed

Incorporate Domain Knowledge

Testing Best Practices:

- Equivalence partitioning for input coverage

- Boundary value analysis for edge cases

- Risk-based prioritization strategies

- Test independence principles

- Data-driven testing approaches
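
Boundary value analysis is straightforward to encode so that an agent can apply it mechanically. A minimal sketch for an inclusive integer range follows; the function names and the quantity field with limits 1 and 100 are assumptions chosen for illustration.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Return classic boundary test inputs for an inclusive integer range.

    Covers just outside, on, and just inside each boundary.
    """
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = [minimum - 1, minimum, minimum + 1,
                  maximum - 1, maximum, maximum + 1]
    # Deduplicate while keeping order (narrow ranges produce overlaps).
    return list(dict.fromkeys(candidates))


def is_valid(value: int, minimum: int, maximum: int) -> bool:
    """Expected verdict: whether the value falls inside the valid partition."""
    return minimum <= value <= maximum


# Hypothetical field: quantity must be between 1 and 100.
cases = [(v, is_valid(v, 1, 100)) for v in boundary_values(1, 100)]
```

Each entry in `cases` pairs an input with its expected verdict, so the values 0 and 101 are expected to be rejected and 1, 2, 99, and 100 accepted.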

Quality Heuristics:

- Complex code requires more testing

- Recent changes carry higher defect risk

- Critical features deserve proportional validation

- Frequent failures indicate systemic issues

- Performance degradation needs investigation

Organizational Context:

- Company-specific testing standards

- Regulatory compliance requirements

- Team workflow preferences

- Historical defect patterns

- Business priority alignment

Step 4: Task Planning and Execution

Translate QA agent decisions into effective action sequences:

Action Sequence Generation

Multi-Step Workflows:

- Decompose high-level goals into specific tasks

- Order actions respecting dependencies

- Parallelize independent operations

- Insert checkpoints for validation

- Define success criteria for each step
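
Ordering actions by dependency while parallelizing independent ones amounts to a topological sort. The sketch below uses `graphlib` from the Python standard library (3.9 and later); the step names and the dependency graph are illustrative assumptions.

```python
from graphlib import TopologicalSorter

# Each key depends on the steps in its set (names are illustrative).
dependencies = {
    "parse_requirements": set(),
    "generate_test_cases": {"parse_requirements"},
    "generate_test_data": {"parse_requirements"},
    "execute_tests": {"generate_test_cases", "generate_test_data"},
    "report_results": {"execute_tests"},
}


def plan_batches(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group steps into batches; steps within a batch can run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()                  # raises CycleError on circular dependencies
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)
    return batches


batches = plan_batches(dependencies)
```

In this example the test case and test data generation steps land in the same batch, since both depend only on the parsed requirements.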

Example Agentic Testing Workflow:

- Parse requirement document extracting key functionality

- Identify testable conditions and acceptance criteria

- Generate test case outline covering all scenarios

- Create detailed test steps with expected results

- Generate appropriate test data for inputs

- Review generated tests for completeness

- Submit tests to management system

- Link tests to requirements for traceability

Orchestration Framework Integration

Workflow Engines:

- Temporal for durable execution with state management

- Apache Airflow for complex DAG-based workflows

- Prefect for Python-native orchestration

- Camunda for BPMN-based process automation

- Custom state machines for specific QA agent needs

Coordination Capabilities:

- Retry logic for transient failures

- Timeout handling for hanging operations

- Conditional branching based on results

- Loop constructs for iterative processing

- Error recovery and compensation actions
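
Workflow engines usually provide retry handling out of the box, but the idea is simple enough to show directly. The following sketch retries a step with exponential backoff; `TransientError`, the `retry` helper, and the delay values are hypothetical and not taken from any of the engines listed above.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (e.g. a flaky network call)."""


def retry(operation: Callable[[], T], attempts: int = 3, base_delay: float = 0.5,
          sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `operation`, retrying transient failures with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except TransientError:
            if attempt == attempts:
                raise                               # out of retries: surface the failure
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5s, then 1s, then 2s, ...
    raise ValueError("attempts must be at least 1")
```

Only `TransientError` is retried, so genuine test failures or programming errors surface immediately instead of being masked. The `sleep` parameter exists so the delay can be replaced in unit tests.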

Tool and API Integration

Test Execution:

- Selenium WebDriver for browser automation

- Appium for mobile application testing

- REST Assured for API validation

- JMeter for performance testing

- Postman for API exploration

Test Management:

- TestRail API for test case operations

- Xray REST API for Jira integration

- Zephyr endpoints for test cycles

- qTest APIs for enterprise management

- Custom tool integrations via webhooks

CI/CD Systems:

- Jenkins pipeline trigger and status APIs

- GitHub Actions workflow dispatch

- GitLab CI pipeline operations

- Azure DevOps REST APIs

- CircleCI API endpoints

Bug Tracking:

- Jira issue creation and updates

- GitHub Issues API operations

- Azure Boards work item management

- Bugzilla REST API (the older XML-RPC interface is deprecated)

- Custom tracking system integration

Safe Execution Environments

Isolation Mechanisms:

- Containerized execution for test runs

- Sandbox environments for script validation

- Read-only production access where needed

- Network segmentation protecting critical systems

- Resource limits preventing runaway processes

Fallback Strategies:

- Default to safe actions when uncertain

- Escalate to humans for critical decisions

- Implement circuit breakers for repeated failures

- Provide manual override capabilities

- Maintain comprehensive audit logs
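
A circuit breaker for repeated failures can be sketched in a few lines. The class below is an illustrative assumption rather than a specific library's API: it blocks calls after `max_failures` consecutive errors and allows a single trial call once `reset_after` seconds have passed.

```python
import time
from typing import Callable, Optional


class CircuitOpenError(Exception):
    """Raised when calls are blocked because the breaker is open."""


class CircuitBreaker:
    """Stop calling a failing dependency until a cool-down period has passed."""

    def __init__(self, max_failures: int = 3, reset_after: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, operation: Callable[[], object]) -> object:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; escalate or wait")
            # Cool-down elapsed: allow one trial call. A single further
            # failure reopens the circuit immediately.
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = operation()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            raise
        self._failures = 0            # success closes the circuit fully
        return result
```

An agent could wrap calls to a test environment or external API in `breaker.call(...)` and treat `CircuitOpenError` as a signal to fall back to a safe action or escalate to a human.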

Step 5: Establish Continuous Learning and Feedback Loops

QA agents improve through experience and feedback:

Collect Performance Metrics

Execution Metrics:

- Test success and failure rates

- Execution time and resource utilization

- Coverage achieved per test run

- Defect detection effectiveness

- False positive and negative rates
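
False positive and false negative rates follow directly from four counts. The sketch below shows one way to compute them; the `DetectionCounts` class and the sample numbers are assumptions for illustration, not measurements.

```python
from dataclasses import dataclass


@dataclass
class DetectionCounts:
    """Confusion counts for test verdicts compared against confirmed outcomes."""
    true_positives: int    # test failed and a real defect was confirmed
    false_positives: int   # test failed but no defect existed (e.g. flaky test)
    true_negatives: int    # test passed and no defect existed
    false_negatives: int   # test passed but a defect escaped

    @staticmethod
    def _ratio(numerator: int, denominator: int) -> float:
        return numerator / denominator if denominator else 0.0

    @property
    def false_positive_rate(self) -> float:
        return self._ratio(self.false_positives,
                           self.false_positives + self.true_negatives)

    @property
    def false_negative_rate(self) -> float:
        return self._ratio(self.false_negatives,
                           self.false_negatives + self.true_positives)

    @property
    def precision(self) -> float:
        return self._ratio(self.true_positives,
                           self.true_positives + self.false_positives)


# Hypothetical counts from one release cycle.
counts = DetectionCounts(true_positives=18, false_positives=2,
                         true_negatives=78, false_negatives=2)
```

For these sample counts the false positive rate is 2/80, the false negative rate is 2/20, and precision is 18/20.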

Decision Quality:

- Accuracy of defect triage categorization

- Appropriateness of test prioritization

- Effectiveness of self-healing repairs

- Relevance of generated test scenarios

- Timeliness of escalations to humans

Business Impact:

- Reduction in manual testing effort

- Acceleration of release cycles

- Cost savings from automation

- Quality improvement in releases

- Developer productivity enhancements

Implement Self-Improvement Mechanisms

Supervised Learning:

- Human corrections train classification models

- Validated test cases improve generation quality

- Reviewed triage decisions refine categorization

- Approved healing actions strengthen repair logic

- Feedback on priorities optimizes future selections

Reinforcement Learning:

- Reward successful defect detection

- Penalize false positives and unnecessary work

- Encourage efficient resource utilization

- Optimize for coverage and execution speed balance

- Learn testing strategies through trial and feedback

Continuous Model Updates:

- Retrain models with accumulated data regularly

- Incorporate new test patterns and application changes

- Adapt to evolving codebase characteristics

- Update risk models based on recent defects

- Refine decision policies through analysis

Enable Human-in-the-Loop Supervision

Review Interfaces:

- Dashboard showing QA agent decisions and reasoning

- Approval workflows for critical actions

- Override capabilities for incorrect choices

- Feedback mechanisms for continuous improvement

- Explanation views justifying agent decisions

Escalation Triggers:

- Low confidence scores require human review

- Novel situations not seen during training

- High-impact decisions needing validation

- Conflicting signals from data sources

- Policy violations or ethical concerns
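
Several of the escalation triggers above reduce to simple checks on a proposed decision. The following sketch routes a decision either to automatic execution or to human review; the `Decision` fields and the 0.85 confidence threshold are hypothetical and would need tuning against real review outcomes.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    confidence: float          # 0..1, as reported by the agent's model
    high_impact: bool = False
    seen_before: bool = True   # False for situations unlike the training data


def route(decision: Decision, min_confidence: float = 0.85) -> tuple[str, str]:
    """Return ('auto' or 'human_review', reason) for a proposed decision."""
    if decision.high_impact:
        return "human_review", "high-impact decision"
    if not decision.seen_before:
        return "human_review", "novel situation"
    if decision.confidence < min_confidence:
        return "human_review", "low confidence"
    return "auto", "within autonomous limits"
```

Returning the reason alongside the verdict gives reviewers context and makes each escalation usable later as a labeled training example.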

Training Opportunities:

- Complex cases become supervised learning examples

- Human decisions teach agents correct approaches

- Domain expert knowledge transfers to models

- Edge cases improve agent robustness

- Organizational preferences get encoded

Step 6: Design Action and Integration Layer

Connect QA agents to organizational testing infrastructure:

System Integrations

Test Management Platforms:

- Create, update, and execute test cases

- Link tests to requirements and user stories

- Retrieve test execution history

- Update test status and results

- Generate coverage reports

CI/CD Pipelines:

- Trigger automated test runs

- Report test results to pipeline

- Gate deployments based on quality

- Update build status with findings

- Integrate with deployment workflows

Bug Tracking Systems:

- Create defect tickets automatically

- Update issue status and assignments

- Link bugs to failed tests

- Add diagnostic information and logs

- Track resolution progress

Reporting Platforms:

- Generate quality dashboards

- Publish test metrics and KPIs

- Create executive summaries

- Distribute notifications and alerts

- Archive historical reports

Automation Triggers

Event-Based Activation:

- Code commits trigger test generation

- Pull requests initiate validation

- Deployments activate smoke tests

- Threshold violations start investigation

- Schedule-based regression execution
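
Event-based activation is essentially a mapping from event types to agent actions. A minimal in-process sketch is shown below; the `EventRouter` class, the event names, and the payload fields are assumptions, and in practice the events would arrive through webhooks or a message queue.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], str]


class EventRouter:
    """Map event types to the agent actions they should trigger."""

    def __init__(self) -> None:
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def on(self, event_type: str) -> Callable[[Handler], Handler]:
        """Decorator that registers a handler for `event_type`."""
        def register(handler: Handler) -> Handler:
            self._handlers[event_type].append(handler)
            return handler
        return register

    def dispatch(self, event_type: str, payload: dict) -> list[str]:
        """Run every handler for the event; unknown events trigger nothing."""
        return [handler(payload) for handler in self._handlers.get(event_type, [])]


router = EventRouter()


@router.on("pull_request.opened")
def validate_pull_request(payload: dict) -> str:
    return f"run validation suite for PR #{payload['number']}"


@router.on("deployment.finished")
def run_smoke_tests(payload: dict) -> str:
    return f"run smoke tests against {payload['environment']}"
```

The handlers here only return a description of the action; a real agent would enqueue the corresponding workflow instead.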

Notification Mechanisms:

- Email alerts for critical failures

- Slack messages for team updates

- Dashboard updates for stakeholders

- Mobile notifications for urgent issues

- Webhook calls to external systems

Incident Handling:

- Automated ticket creation for failures

- Escalation based on severity and urgency

- Assignment to appropriate team members

- Status tracking until resolution

- Post-mortem data collection

Transparency and Compliance

Audit Trails:

- Log all QA agent actions with timestamps

- Record decision reasoning and data used

- Track configuration changes over time

- Maintain version history for models

- Document human interventions and overrides

Decision Logs:

- Explain why specific actions were taken

- Show confidence scores for decisions

- Link to supporting evidence and data

- Highlight uncertainty and alternatives considered

- Enable reproducibility for review
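
A decision log entry covering the points above can be a single structured record per action. The sketch below writes one JSON line per decision; the field names and the sample identifiers (`build/1234/log`, `commit/abc123`) are hypothetical.

```python
import json
from datetime import datetime, timezone


def decision_record(agent: str, action: str, reason: str, confidence: float,
                    evidence: list[str], alternatives: list[str]) -> str:
    """Serialize one agent decision as a JSON line for an append-only log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "reason": reason,
        "confidence": round(confidence, 3),
        "evidence": evidence,          # links or IDs of the data the agent used
        "alternatives": alternatives,  # options considered but not chosen
    }
    return json.dumps(record, sort_keys=True)


line = decision_record(
    agent="triage-agent",
    action="assign_to:payments-team",
    reason="stack trace points at files changed in the latest payments commit",
    confidence=0.91,
    evidence=["build/1234/log", "commit/abc123"],
    alternatives=["assign_to:platform-team"],
)
```

Keeping one JSON object per line makes the log easy to append to, search, and replay during an audit.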

Compliance Support:

- Demonstrate regulatory requirement coverage

- Provide evidence for audit requests

- Track data privacy and security practices

- Document approval workflows

- Maintain retention policies

Step 7: Deploy and Monitor with MLOps Best Practices

Ensure reliable operation of agentic testing systems:

CI/CD for QA Agents

Build Pipeline:

- Automated testing of agent code

- Model validation and performance checks

- Integration testing with target systems

- Security scanning for vulnerabilities

- Package creation and versioning

Deployment Process:

- Canary deployments that test changes on a subset of workloads first

- Blue-green deployments for zero downtime

- Feature flags controlling agent capabilities

- Rollback mechanisms for issues

- Staged rollout to production

Update Management:

- Model retraining and validation

- Configuration updates without downtime

- Backward compatibility maintenance

- Version control for all artifacts

- Change documentation and approval

Runtime Monitoring

Behavior Tracking:

- Monitor agent decisions and actions

- Detect anomalous behavior patterns

- Track performance against baselines

- Identify drift in model predictions

- Alert on unexpected outcomes
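
Drift in model predictions can be flagged with a very simple check before investing in a full statistical test. The sketch below compares the mean of recent confidence scores against a baseline, measured in baseline standard deviations; the function names, the sample values, and the threshold of 3.0 are assumptions, and this is a crude heuristic rather than a rigorous drift test.

```python
from statistics import mean, pstdev


def drift_score(baseline: list[float], recent: list[float]) -> float:
    """Return how many baseline standard deviations the recent mean has moved."""
    if len(baseline) < 2 or not recent:
        raise ValueError("need at least two baseline values and one recent value")
    spread = pstdev(baseline)
    if spread == 0:
        return 0.0 if mean(recent) == mean(baseline) else float("inf")
    return abs(mean(recent) - mean(baseline)) / spread


def has_drifted(baseline: list[float], recent: list[float],
                threshold: float = 3.0) -> bool:
    """Flag drift when the recent mean moves more than `threshold` deviations."""
    return drift_score(baseline, recent) > threshold


# Hypothetical triage-model confidence scores.
baseline_scores = [0.90, 0.92, 0.88, 0.91, 0.89]
stable_week = [0.90, 0.91, 0.89]
degraded_week = [0.70, 0.72, 0.68]
```

With these sample values, `has_drifted(baseline_scores, degraded_week)` is true and `has_drifted(baseline_scores, stable_week)` is false, so only the degraded week would raise an alert.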

System Health:

- Resource utilization monitoring

- API rate limits and quotas

- Error rates and failure patterns

- Latency and response times

- Integration health checks

Quality Metrics:

- Test coverage trends over time

- Defect detection effectiveness

- False positive and negative rates

- Maintenance effort reduction

- Business impact measurements

Scalability Infrastructure

Containerization:

- Docker containers for consistent deployment

- Kubernetes orchestration for scaling

- Resource limits and quotas enforcement

- Health checks and auto-recovery

- Load balancing across instances

Cloud and Edge Computing:

- Cloud platforms for elastic scaling

- Edge deployment for low-latency responses

- Hybrid architectures for flexibility

- Multi-region redundancy for reliability

- Cost optimization through auto-scaling

Step 8: Iterate and Scale

Expand agentic testing capabilities systematically:

Start with Pilot Tasks

Initial Scope:

- Select 2-3 high-value repetitive tasks

- Focus on clear, well-defined problems

- Choose areas with available data

- Target measurable success criteria

- Ensure manageable complexity

Validation Approach:

- Run QA agent alongside human testers initially

- Compare agent and human results

- Measure time savings and accuracy

- Gather feedback from users

- Iterate based on learnings

Gradual Expansion

Scope Growth:

- Add related tasks leveraging existing capabilities

- Expand to additional application areas

- Increase autonomy as confidence builds

- Handle more complex scenarios progressively

- Extend to additional teams and projects

Capability Enhancement:

- Improve model accuracy through data

- Add new decision-making capabilities

- Integrate additional data sources

- Enhance reasoning sophistication

- Expand action repertoire

Multi-Agent Orchestration

Agent Collaboration:

- Specialized QA agents for different tasks

- Coordination protocols between agents

- Shared knowledge and learning

- Load balancing across agent pool

- Hierarchical agent structures

Agentic Testing Ecosystems:

- Test generation agents creating scenarios

- Execution agents running validations

- Analysis agents triaging results

- Maintenance agents fixing issues

- Reporting agents communicating findings

Governance Frameworks

Ethical AI:

- Fairness in test prioritization

- Bias detection and mitigation

- Transparency in decision-making

- Accountability for agent actions

- Human oversight requirements

Compliance Assurance:

- Regulatory requirement adherence

- Data privacy protection

- Security best practices

- Quality standards maintenance

- Documentation requirements

Real-World Example Use Cases

Automated Test Script Generation and Self-Healing

Scenario: E-commerce platform with frequent UI changes

QA Agent Implementation:

- Monitor code repository for frontend changes

- Generate test scripts covering modified components

- Execute tests across browser combinations

- Self-heal when element locators change

- Report results to test management system
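
The self-healing step can be illustrated without a browser. In the sketch below, page elements are plain dictionaries and the agent falls back to attribute similarity when the recorded `id` no longer exists. All names and sample elements are hypothetical, and a real implementation would query the DOM through a tool such as Selenium WebDriver and have a human confirm the healed locator before saving it.

```python
from typing import Optional

Element = dict[str, str]


def find_by_id(page: list[Element], element_id: str) -> Optional[Element]:
    return next((el for el in page if el.get("id") == element_id), None)


def heal_locator(page: list[Element], last_known: Element,
                 min_score: float = 0.5) -> Optional[Element]:
    """Find the element best matching attributes recorded on the last passing run."""
    keys = [k for k in last_known if k != "id"]

    def score(candidate: Element) -> float:
        if not keys:
            return 0.0
        hits = sum(candidate.get(k) == last_known[k] for k in keys)
        return hits / len(keys)

    best = max(page, key=score, default=None)
    return best if best is not None and score(best) >= min_score else None


def locate(page: list[Element], last_known: Element) -> tuple[Optional[Element], bool]:
    """Return (element, healed); `healed` is True when the fallback found it."""
    element = find_by_id(page, last_known["id"])
    if element is not None:
        return element, False
    healed = heal_locator(page, last_known)
    return healed, healed is not None


# Hypothetical snapshot from the last passing run and the page after a UI change.
last_known = {"id": "checkout-btn", "tag": "button",
              "text": "Checkout", "class": "btn primary"}
page = [
    {"id": "cart-link", "tag": "a", "text": "Cart", "class": "nav"},
    {"id": "btn-checkout-v2", "tag": "button",
     "text": "Checkout", "class": "btn primary large"},
]

element, healed = locate(page, last_known)
```

Here the original `id` is gone, but the renamed button still matches on tag and text, so it is returned with `healed` set to true.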

Business Impact:

- Test creation time reduced by 80%

- Maintenance effort reduced by 70%

- Coverage increased across all features

- Release cycle shortened by 40%

- Team capacity redirected to exploratory testing

Dynamic Defect Triage and Predictive Risk Analytics

Scenario: Financial services application with complex codebase

Agentic Testing Approach:

- Analyze failed test results automatically

- Categorize defects by type and severity

- Assign bugs based on code change analysis

- Predict high-risk modules before testing

- Prioritize testing effort appropriately

Outcomes:

- Defect triage time reduced by 90%

- Assignment accuracy improved to 95%

- Production incidents decreased by 60%

- Testing efficiency increased by 50%

- Developer satisfaction improved significantly

Intelligent Regression Testing and Continuous Monitoring

Scenario: SaaS platform with daily deployments

QA Agent Solution:

- Select relevant regression tests per deployment

- Execute tests on every code commit

- Monitor production for anomalies

- Generate alerts for performance degradation

- Automatically roll back problematic deployments
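
The first item in this list, selecting relevant regression tests per deployment, can be sketched as a lookup against a coverage map. The `select_tests` function, the test names, and the file paths below are assumptions for illustration; a real coverage map would come from coverage tooling rather than being written by hand.

```python
def select_tests(changed_files: set[str], coverage_map: dict[str, set[str]],
                 always_run: frozenset[str] = frozenset()) -> list[str]:
    """Pick tests whose covered files overlap the change set, plus a fixed smoke set."""
    selected = {
        test for test, covered in coverage_map.items()
        if covered & changed_files
    }
    return sorted(selected | always_run)


# Hypothetical mapping from test name to the source files it exercises.
coverage_map = {
    "test_login": {"auth/session.py", "auth/views.py"},
    "test_checkout": {"cart/checkout.py", "payments/gateway.py"},
    "test_search": {"search/index.py"},
    "test_smoke_homepage": {"web/home.py"},
}

selected = select_tests(
    changed_files={"payments/gateway.py"},
    coverage_map=coverage_map,
    always_run=frozenset({"test_smoke_homepage"}),
)
```

For a change touching only the payment gateway, the selection is the checkout test plus the always-run smoke test, while the login and search tests are skipped.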

Results:

- Testing time per deployment reduced by 75%

- Deployment confidence increased substantially

- Production issues caught before user impact

- Release frequency doubled safely

- Infrastructure costs optimized through intelligent selection

Tools and Frameworks to Consider

AI Agent Development Frameworks

LangChain:

- Framework for LLM-powered QA agents

- Chain complex reasoning workflows

- Integrate with various data sources

- Memory management for context

- Tool calling and action execution

n8n:

- No-code workflow automation platform

- Visual workflow design for agentic testing

- Extensive integration library

- Conditional logic and branching

- API and webhook support

Robylon:

- AI agent builder for automation

- Pre-built templates for common tasks

- Natural language programming

- Cloud-based execution

- Collaboration features

CrewAI:

- Multi-agent orchestration framework

- Role-based agent design

- Task delegation and coordination

- Collaborative problem-solving

- Python-native implementation

Specialized Testing Platforms

KaneAI:

Purpose-built for intelligent and agentic test orchestration

- Natural language test authoring powered by AI

- Agentic testing workflows driven by autonomous QA agents

- Self-healing automation that adapts to UI and API changes

- Comprehensive platform integration across CI/CD, cloud, and real devices

Open-Source and Commercial Options

Open-Source Libraries:

- TensorFlow and PyTorch for model building

- scikit-learn for classical ML algorithms

- Transformers library for LLM integration

- Gymnasium (the maintained successor to OpenAI Gym) for reinforcement learning

- MLflow for experiment tracking

Commercial Platforms:

- Cloud provider AI services (AWS, Azure, GCP)

- Testing platforms with AI capabilities

- MLOps platforms for deployment

- Monitoring and observability tools

- Data pipeline orchestration systems

Conclusion

Building intelligent QA agents transforms manual, repetitive tasks into scalable, autonomous workflows that deliver superior quality assurance outcomes through agentic testing approaches.

Following proper design principles ensures QA agents understand their purpose clearly, perceive environments accurately, reason intelligently about problems, execute actions safely, and learn continuously from experience.

Deployment and monitoring best practices guarantee reliable operation, while iterative expansion enables organizations to scale agentic testing capabilities progressively from pilot tasks to comprehensive autonomous quality assurance.

Organizations investing in intelligent QA agents position themselves for sustained competitive advantage through superior software quality delivered rapidly, reduced testing costs freeing resources for innovation, and the organizational agility to respond to changing business needs while maintaining comprehensive quality validation that users